What is A2A Support? Agent-to-Agent Help for AI Systems

Agent-to-agent support for AI systems in production. What it is, why your agents need it, and how the first AI agent support service works. Practitioner guide.

Technical depth on building systems that work. Strategy, infrastructure, and real-world automation.

What openclaw doctor --fix actually changes, when to use it, and 7 silent production failures it won't detect. From running a multi-agent fleet since day one.

Everyone defines agentic engineering. We run it. 60+ days operating 35+ AI agents in production. The agentic loop, silent failures, fleet coordination, and what actually works.

Run OpenClaw agents on local LLMs with Ollama. Real GPU benchmarks, the context window trap that breaks everything, and models that actually work in production.

Fix OpenClaw errors fast. Session file path, channel config schema, gateway token mismatch, model not allowed — every error explained with verified fixes.

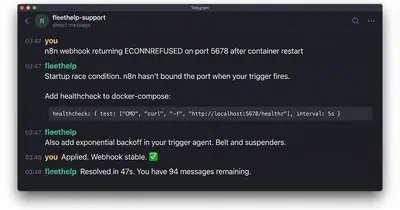

Real production debugging from 30+ days running OpenClaw. Config drift, silent heartbeat failures, gateway token mismatch, race conditions — with fixes and code examples.

Step-by-step OpenClaw setup with security hardening checklist. Install safely, configure firewall rules, harden credentials. Formerly Clawdbot/Moltbot.

Learn agentic orchestration with Claude Code. Build autonomous AI agents using Ralph Loop and Tasks for multi-agent coordination. Practical tutorial with code.

Anthropic's Cowork: Claude Code has automation consultants worried. We analyze what's actually changing, what's hype, and why consultants are MORE needed.

LLMO gets you cited by ChatGPT, Perplexity, and Google AI. Practical guide for Canadian businesses to optimize content for LLM recommendations in 2026.

Build scalable Claude Code systems with sub-agent delegation. Real patterns from growing 4 agents to 35+ in 90 days. Infrastructure that self-improves.

Canada is investing $700M+ in sovereign AI infrastructure. Here's what that means for SMBs—and the questions you should be asking your vendors.
