TL;DR: The “best AI agent builder” question is the wrong question. The real decision is build vs buy: do you want to own and operate agent infrastructure (code frameworks, Claude Code agents), or buy managed execution (no-code platforms)? Code-first approaches win on control and cost at scale. No-code platforms win on speed and business-side accessibility. Neither wins universally. This framework gives you the routing logic to pick the right one.

Contents

- What an AI agent builder actually is

- The decision is build-vs-buy, not vendor-vs-vendor

- When code frameworks win

- When no-code platforms win

- When neither category fits: Claude Code agents directly

- The decision framework

- Specific platform notes

- Common mid-market mistakes

- What the AI agent builder market actually looks like in 2026

- Key Takeaways

- FAQ

The search volume for “ai agent builder” grew 177% year-over-year through April 2026, according to industry keyword data. The SERP is flooded with listicles: “8 best AI agent builders,” “13 best AI agent platforms.” They all answer the same wrong question.

The right question isn’t which platform has the best feature list. It’s whether you’re building something you own and operate, or buying something a vendor operates for you. That distinction determines cost structure, lock-in profile, and what kind of team you need. The listicles skip it entirely.

This post is a decision framework for mid-market IT directors and engineering leaders who need to make that call with real budget constraints and real team capacity.

What an AI agent builder actually is

An AI agent builder is any tool or framework that enables you to create autonomous AI systems: software that uses tools, makes decisions, and executes multi-step tasks without constant human direction.

Three categories, and they are not interchangeable:

Code frameworks (LangChain, CrewAI, AutoGen): Python libraries you install in your own environment. You write agent logic in code, run it on your infrastructure, and own everything. The framework provides orchestration primitives, memory management, and tool-calling abstractions. The LLM API calls are yours to manage and pay for directly.

No-code and low-code platforms (n8n, OpenAI Agent Builder, Vertex AI Agent Builder, Gumloop, UiPath, Glean): Visual environments where you configure agents by connecting nodes, filling forms, and defining rules. The platform handles execution, scaling, and reliability. You pay platform fees on top of LLM costs, and your agent logic lives in the vendor’s schema.

Bring-your-own-orchestration (Claude Code agents, raw API scripting): You write the orchestration layer yourself using the LLM’s native capabilities, without an intermediary framework. Agents call tools directly. There is no abstraction between your code and the model. This is the path for teams that find frameworks over-engineered for their actual use case.
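To make “no abstraction between your code and the model” concrete, here is a minimal sketch of a hand-written tool-calling loop. It is not Kaxo’s implementation and not any vendor’s API. `fake_model` is a hypothetical stand-in for a real LLM call, and the `lookup_order` tool and message format are invented for illustration. The point is how little orchestration code the pattern needs.

```python
from typing import Callable, Dict, List

# Hypothetical tool registry: plain Python functions the agent is allowed to call.
TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_order": lambda order_id: f"order {order_id}: shipped",
}


def fake_model(history: List[dict]) -> dict:
    """Stand-in for a real LLM API call (hypothetical).

    Requests a tool on the first turn, then answers using the tool result.
    """
    last = history[-1]
    if last["role"] == "user":
        return {"type": "tool_call", "tool": "lookup_order", "input": "A-17"}
    return {"type": "final", "text": f"Status: {last['content']}"}


def run_agent(
    task: str,
    model: Callable[[List[dict]], dict] = fake_model,
    max_steps: int = 5,
) -> str:
    """Minimal agent loop: call the model, run any requested tool, feed the result back."""
    history = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        reply = model(history)
        if reply["type"] == "final":
            return reply["text"]
        # The model asked for a tool: execute it directly, no framework in between.
        result = TOOLS[reply["tool"]](reply["input"])
        history.append({"role": "tool", "content": result})
    raise RuntimeError("agent did not finish within max_steps")


print(run_agent("Where is order A-17?"))  # → Status: order A-17: shipped
```

Swapping `fake_model` for a real API client, and the dict of lambdas for real functions, is the whole integration surface. The `max_steps` cap is the kind of convention a framework would otherwise enforce for you.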

The category framing matters more than vendor comparisons because every vendor comparison assumes you have already answered the build-vs-buy question. You haven’t. The category is the decision. The vendor is a detail.

The decision is build-vs-buy, not vendor-vs-vendor

The listicle posts answer the wrong question. “LangChain vs CrewAI” or “n8n vs OpenAI Agent Builder” are vendor comparisons. The prior question is whether you are building agent infrastructure or buying managed agent execution.

Build means your team writes, runs, and maintains agent code on infrastructure you control. You pay LLM API costs directly. You handle failures, observability, and updates. You own the logic and can change it without asking a vendor.

Buy means a platform runs your agents on their infrastructure. You configure in their UI. You pay their pricing model. When they update their schema or deprecate a feature, your agents break on their schedule.

The cost structures look different too. Build scales cheaply after the initial engineering investment: a Claude Code sub-agent fleet can run under $500/year in API costs once built. The investment is engineer time upfront. Buy starts cheap and gets expensive as volume grows. No-code platforms charge per execution or per seat, and that meter runs whether you are paying attention or not.
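The “under $500/year” figure depends on run volume, token counts, and per-token prices. Below is a back-of-envelope sketch that assumes per-million-token pricing. The prices and volumes in the example are placeholders, not current Claude rates, so substitute your model’s published pricing.

```python
def annual_api_cost(
    runs_per_day: float,
    input_tokens_per_run: float,
    output_tokens_per_run: float,
    input_price_per_mtok: float,
    output_price_per_mtok: float,
    days_per_year: int = 365,
) -> float:
    """Estimate yearly LLM API spend in dollars, assuming per-million-token pricing."""
    cost_per_run = (
        input_tokens_per_run * input_price_per_mtok
        + output_tokens_per_run * output_price_per_mtok
    ) / 1_000_000
    return cost_per_run * runs_per_day * days_per_year


# Placeholder inputs: 50 agent runs a day, 2,000 input and 500 output tokens per run,
# $1 per million input tokens and $5 per million output tokens.
estimate = annual_api_cost(50, 2_000, 500, 1.0, 5.0)
print(f"${estimate:,.2f} per year")
```

The estimate is linear in every input. Doubling run volume doubles the bill, and so does moving a sub-agent to a model whose input and output prices are both twice as high. That is why the choice of model for each sub-agent matters as much as how often it runs.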

The failure modes are different as well. Build fails when your team doesn’t have the capacity to maintain agent code. Buy fails when the vendor changes their platform, hits reliability issues, or the cost-per-execution math breaks down at scale.

Every mid-market leader should answer one question before any AI agent framework comparison: do we want to own this infrastructure or consume it as a service? Everything else is downstream of that.

When code frameworks win

Code frameworks (LangChain, CrewAI, AutoGen) are the right choice when your situation matches at least three of these:

Stable engineering team with capacity. Frameworks require code. Someone has to write it, review it, and fix it when it breaks. If you don’t have developers who will be on-call for agent maintenance, a framework is the wrong choice regardless of its technical merits.

Full control of agent behavior. Custom tooling, custom memory schemas, non-standard routing logic, integration with internal systems that lack official connectors: these are easier in code. Frameworks give you full access to every layer. No-code platforms give you the connectors they have built.

Multi-agent systems of genuine complexity. Simple multi-agent workflows are achievable in no-code platforms. Complex orchestration, where agents spawn sub-agents dynamically, share state across long-running tasks, and route based on runtime conditions, is cleaner to express in code. CrewAI and LangGraph (the LangChain team’s graph-based orchestration library) are built for this. Visual workflow builders are not.
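Here is a plain-Python sketch of what “spawn sub-agents dynamically, share state, route on runtime conditions” means in code. It is a framework-free illustration, not CrewAI or LangGraph syntax. The three sub-agents are hypothetical functions standing in for model-backed agents, and the keyword check stands in for whatever runtime condition your system actually evaluates.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class SharedState:
    """State every sub-agent can read and write during a long-running task."""
    tickets: List[str]
    results: Dict[str, str] = field(default_factory=dict)
    spawned: List[str] = field(default_factory=list)


# Hypothetical sub-agents; in a real system each would wrap its own model calls.
def billing_agent(ticket: str, state: SharedState) -> str:
    return "refund issued" if "refund" in ticket else "ESCALATE"


def tech_agent(ticket: str, state: SharedState) -> str:
    return "restart suggested"


def escalation_agent(ticket: str, state: SharedState) -> str:
    # Reads shared state written by earlier sub-agents.
    prior = len(state.results)
    return f"assigned to human after {prior} prior ticket(s)"


def coordinator(state: SharedState) -> SharedState:
    """Route each ticket at runtime and spawn extra sub-agents only when needed."""
    for ticket in state.tickets:
        # Runtime routing: the condition is evaluated per ticket, not wired in advance.
        agent: Callable[[str, SharedState], str] = (
            billing_agent if ("invoice" in ticket or "refund" in ticket) else tech_agent
        )
        state.spawned.append(agent.__name__)
        outcome = agent(ticket, state)
        # Dynamic spawn: escalate only if the first sub-agent asks for it.
        if outcome == "ESCALATE":
            state.spawned.append(escalation_agent.__name__)
            outcome = escalation_agent(ticket, state)
        state.results[ticket] = outcome
    return state


final = coordinator(SharedState(tickets=[
    "refund for invoice 42",
    "invoice total looks wrong",
    "app crashes on login",
]))
print(final.spawned)
print(final.results)
```

The escalation sub-agent exists only when a worker asks for it at runtime, and it reads state other agents wrote. That conditional, state-dependent spawning is the kind of logic this section argues is easier to express in code.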

Cost-sensitivity at scale. No per-execution platform fees when you run code on your own infrastructure. At high enough volume, the engineering cost of building is less than the platform fee of buying. The crossover point varies, but if you are processing millions of agent steps per month, the math almost always favors building.
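The crossover point can be estimated with simple arithmetic. This sketch makes two simplifying assumptions. First, LLM API spend is the same under both options, so it cancels out. Second, the platform charges a flat fee per agent step. All figures in the example are illustrative, not benchmarks, and real platform pricing often mixes seats, tiers, and execution caps, so treat the result as a first approximation.

```python
def breakeven_steps_per_month(
    build_upfront: float,
    build_maintenance_per_month: float,
    platform_fee_per_step: float,
    horizon_months: int,
) -> float:
    """Monthly agent-step volume at which building and buying cost the same.

    Assumes LLM API spend is identical under both options, so it cancels out,
    and that the platform charges a flat fee per step (a simplification).
    """
    build_total = build_upfront + build_maintenance_per_month * horizon_months
    # Buying costs fee * steps_per_month * months; solve fee * x * months == build_total.
    return build_total / (platform_fee_per_step * horizon_months)


# Illustrative inputs only: $60K to build, $2K/month to maintain,
# $0.01 platform fee per step, compared over 24 months.
volume = breakeven_steps_per_month(60_000, 2_000, 0.01, 24)
print(f"{volume:,.0f} steps/month")  # → 450,000 steps/month
```

Above the break-even volume, building is cheaper over the horizon. Below it, the platform is cheaper. Shortening the horizon raises the break-even volume, which is one reason short pilots tend to favor buying.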

Composability with existing infrastructure. CI/CD pipelines, secrets managers, observability stacks, internal API gateways: code agents integrate natively. No-code platforms have varying levels of support for these, and the integration often goes through the platform’s own abstraction layer.

Open-source preference for compliance or audit reasons. LangChain, CrewAI, and AutoGen are open-source. You can inspect every line, self-host, and air-gap if required. Most no-code platforms are SaaS by default; n8n’s self-hostable edition is the main exception on this post’s list.

When no-code platforms win

No-code and low-code AI agent platforms (n8n, OpenAI Agent Builder, Vertex AI Agent Builder, Gumloop, Glean) are the right choice when:

Business-side ownership is required. If the person who needs to change the agent is a PM, ops lead, or department head rather than an engineer, a no-code platform is the only realistic path. Expecting non-engineers to write LangChain Python is a failure mode waiting to happen.

Single-agent or simple multi-agent workflows. Most real business automation problems are one agent doing one thing: read emails, classify support tickets, draft responses, route to the right queue. No-code platforms handle this cleanly. You don’t need a code framework for a single-step agent.

Speed to first agent matters more than long-term cost. A no-code platform can have a working agent in hours. A code framework has setup, dependency management, infrastructure provisioning, and testing cycles. If you are validating whether an agent workflow is worth building at all, start with a no-code platform. Validate first, optimize later.

Vendor lock-in is acceptable given the trade-offs. This is a legitimate business decision, not a technical failure. If your stack is already in Google Cloud, Vertex AI Agent Builder integrates without friction. If your team lives in the OpenAI ecosystem, OpenAI Agent Builder is a reasonable choice. The lock-in concern is real; the question is whether the friction reduction justifies it for your situation.

The $25K-$300K custom development cost is off the table. Industry estimates for custom specialized agent systems put development cost in the $25K to $300K range. For many mid-market organizations, that budget does not exist for an initial deployment. A no-code platform at $200/month is the only viable starting point.

When neither category fits: Claude Code agents directly

There is a middle path that does not appear in most AI agent platform comparisons because it is not a product you buy. It is the model itself, operating with tool access directly.

Claude Code agents are agents running in a Claude Code environment with access to the shell, files, APIs, and any tool you can invoke from a terminal. There is no LangChain abstraction layer sitting between your code and the model. You write a CLAUDE.md file that defines the agent’s role, tools, and behavior. Claude handles the rest.

This path is right when:

- Your team is senior enough to work in a terminal but finds LangChain’s abstraction over-engineered for what they are actually building.

- You want multi-agent coordination without installing a framework. Parent agents spawn sub-agents by specification. The model handles orchestration.

- You want to compose agents with anything that runs in a shell: existing Python scripts, bash pipelines, internal APIs, CLI tools.

- You need to scale to many agents without per-seat licensing. The cost is Claude API calls plus your infrastructure.
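Composing with “anything that runs in a shell” usually comes down to exposing existing commands as tools. Here is a minimal Python sketch of that generic pattern, assuming an allowlisted registry of fixed command templates. It is not how Claude Code is implemented, and the `SHELL_TOOLS` name and its entries are hypothetical.

```python
import subprocess
import sys
from typing import Dict, List

# Hypothetical allowlist: tool name -> fixed argv for an existing script or CLI.
# Commands run as argv lists (no shell=True), and the agent supplies only stdin data.
SHELL_TOOLS: Dict[str, List[str]] = {
    # Counts words on stdin; stands in for any existing script.
    "word_count": [sys.executable, "-c", "import sys; print(len(sys.stdin.read().split()))"],
    # Example of wrapping an existing CLI (requires git on PATH).
    "recent_commits": ["git", "log", "--oneline", "-n", "5"],
}


def run_shell_tool(name: str, stdin_text: str = "", timeout: float = 30.0) -> str:
    """Run an allowlisted command and return its stdout for the agent to read."""
    if name not in SHELL_TOOLS:
        raise KeyError(f"unknown tool: {name}")
    completed = subprocess.run(
        SHELL_TOOLS[name],
        input=stdin_text,
        capture_output=True,
        text=True,
        timeout=timeout,
        check=True,  # Raise if the command exits non-zero.
    )
    return completed.stdout.strip()


print(run_shell_tool("word_count", "read emails and route tickets"))  # → 5
```

The command templates are fixed, and the agent supplies only data on stdin. It can invoke existing scripts but cannot compose arbitrary shell commands. Whether that restriction fits your agents is a design decision, not a requirement of the pattern.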

We run this pattern at Kaxo. Our agent fleet scaled from a handful of agents to 35+ in 90 days using sub-agent delegation, without LangChain, without CrewAI, and without a no-code platform. The architecture is documented in “Scaling Claude Code Agents: 4 to 35 in 90 Days,” and the orchestration patterns are covered in “Agentic Orchestration for Autonomous AI Agents.”

The trade-off: you are writing your own orchestration conventions. There is no framework enforcing best practices. That is a feature for teams that know what they are doing and a liability for teams that don’t.

The decision framework

Use this to route your decision. Match your situation to the category. Pick accordingly.

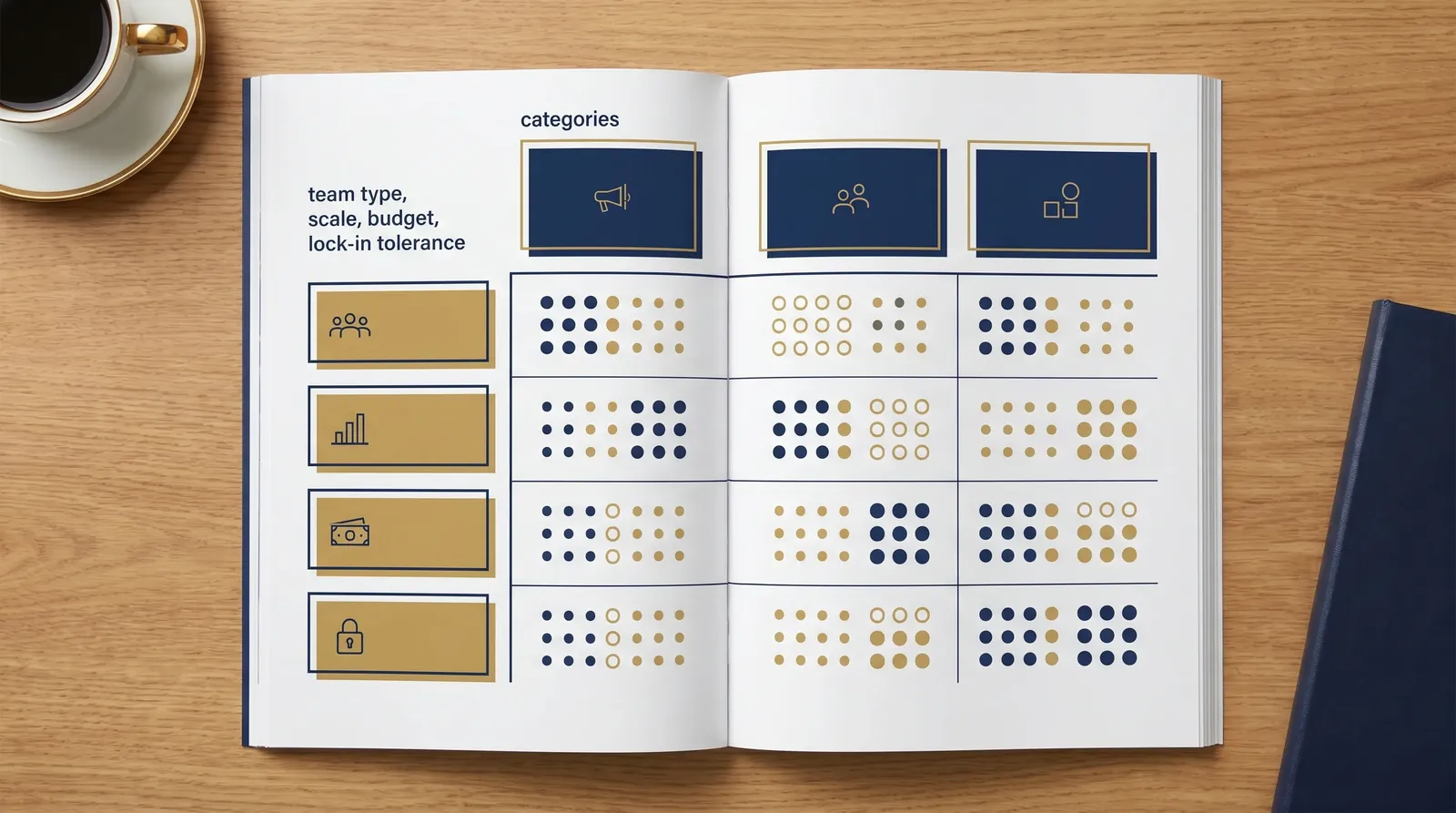

| Dimension | Code Framework | No-Code Platform | Claude Code Agents |
|---|---|---|---|
| Team type | Engineers with Python/ML familiarity | Business staff or developers new to agents | Senior engineers comfortable in the terminal |
| Use case complexity | Complex multi-agent, custom tooling | Single-agent, simple multi-agent | Medium to complex, ops-heavy |
| Budget profile | High upfront, low at scale | Low upfront, variable at scale | Low upfront, low at scale |
| Lock-in tolerance | Low (prefer open-source, portability) | Higher (trade control for speed) | Very low (no framework, no platform vendor) |
| Time to first agent | Weeks (framework + infra setup) | Hours to days | Days (CLAUDE.md + testing) |
| Long-term maintainability | High (your code, your rules) | Vendor-dependent | High (shell scripts are portable) |
| Observability | Full (you instrument it) | Platform-provided (limited control) | Full (you log what you want) |

No dimension is a knockout criterion on its own. The pattern across dimensions tells you where you belong. If most of your rows point to “Code Framework” but your team type points to “No-Code Platform,” solve the team problem before picking the framework. The wrong tool with the right team outperforms the right tool with the wrong team every time.
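The routing logic above can be sketched as a simple vote. This is one reasonable encoding, not a scoring model from this post. Each table row casts one vote for the category it points to, and a team-type mismatch is flagged rather than overridden. The dimension keys and category labels are hypothetical names for the table’s rows and columns.

```python
from collections import Counter
from typing import Dict, List, Tuple

CATEGORIES = ("code_framework", "no_code_platform", "claude_code_agents")


def route(answers: Dict[str, str]) -> Tuple[str, List[str]]:
    """Return the category most dimensions point to, plus any warnings.

    `answers` maps a dimension (e.g. "team_type") to the category your
    situation matches on that row. Ties go to the category seen first.
    """
    if not answers:
        raise ValueError("answer at least one dimension")
    for dimension, category in answers.items():
        if category not in CATEGORIES:
            raise ValueError(f"{dimension}: unknown category {category!r}")
    winner, _ = Counter(answers.values()).most_common(1)[0]
    warnings = []
    team = answers.get("team_type")
    if team is not None and team != winner:
        warnings.append(
            f"team_type points to {team}: solve the team problem before committing to {winner}"
        )
    return winner, warnings


choice, notes = route({
    "team_type": "no_code_platform",
    "use_case_complexity": "code_framework",
    "budget_profile": "code_framework",
    "lock_in_tolerance": "code_framework",
    "time_to_first_agent": "no_code_platform",
    "maintainability": "code_framework",
    "observability": "code_framework",
})
print(choice)  # → code_framework
print(notes)
```

The warning encodes the point about solving the team problem first. The vote still picks a category, but a mismatch on team type is surfaced instead of being silently outvoted.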

Specific platform notes

One paragraph each. Not vendor pitches.

LangChain (python.langchain.com): The most-cited code framework, with a large community and extensive documentation. Its abstraction layer is comprehensive, which means it’s genuinely useful when you will live inside that abstraction. It’s genuinely over-engineered when you won’t. Teams that want to write agent logic in Python without LangChain’s conventions often strip it out after six months. Evaluate honestly whether the abstraction adds value for your use case or adds indirection you’ll spend time fighting.

CrewAI (docs.crewai.com): An opinionated multi-agent framework. Where LangChain is general-purpose, CrewAI is purpose-built for coordinated multi-agent work: defined agents, defined tasks, defined crews. If your use case maps cleanly to “a team of agents each doing a defined job,” CrewAI fits. If you need a single agent or highly dynamic orchestration, it’s the wrong abstraction.

n8n (n8n.io/ai-agents): A visual workflow builder with agent nodes. 500+ integrations, self-hostable, with a source-available core under n8n’s fair-code license. Strong for ops and business teams who want agent capability without writing code. The agent nodes are real: they call LLMs, use tools, make decisions. The ceiling is complex orchestration logic, which the visual editor makes harder to reason about as workflows grow. Self-hosting keeps costs predictable.

OpenAI Agent Builder (developers.openai.com/api/docs/guides/agent-builder): The easiest starting point if your organization already runs on OpenAI for everything else. Visual canvas, tight integration with OpenAI’s tool ecosystem. Lock-in concern: your agent logic is in OpenAI’s schema. If you want to move models later, you are rewriting agents, not swapping a configuration value. Evaluate whether the OpenAI commitment is already made before treating this as neutral.

Vertex AI Agent Builder: Google Cloud’s equivalent. Same trade-off calculus as OpenAI Agent Builder: lowest friction if you’re GCP-anchored, highest friction if you’re not. The integration with Google Cloud services is genuine and useful if that is already your infrastructure.

Claude Code agents: Our preferred path for engineering-led teams. Code-first but lighter than LangChain. Native to the Claude Code environment, no additional abstraction layer, no framework to install. Nothing extra to license beyond Claude Code access; costs scale with API use, not seat count. We have run this at 35+ agents and the architecture holds. The receipt is in the scaling post.

AutoGen, Glean, Gumloop, Lindy, Relay.app: Each has a real use case. AutoGen is oriented toward research-grade multi-agent experimentation, not production ops. Glean fits enterprises that already have Glean for knowledge management. Gumloop and Relay.app are SMB-friendly no-code options for buyers who want agent capability without a developer in the loop. None of these are universally better than what’s above; they just fit different contexts.

Common mid-market mistakes

Starting with LangChain for a single-agent use case. The most common over-engineering mistake. A team reads the LangChain docs, decides it is the serious choice, and spends three weeks configuring an abstraction layer for a workflow that needed one LLM call and one tool. If your agent does one thing, start simpler.
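For scale, this is roughly what “one LLM call and one tool” looks like without a framework. The sketch assumes `llm` is any function that takes a prompt and returns text, for example a hypothetical wrapper around your provider’s SDK. The queue names are invented for illustration.

```python
from typing import Callable

# Hypothetical routing table: the one "tool" this agent uses.
QUEUES = {"billing": "finance-queue", "bug": "engineering-queue", "other": "support-queue"}


def route_ticket(ticket_text: str, llm: Callable[[str], str]) -> str:
    """Classify a ticket with a single model call, then route it to a queue."""
    prompt = (
        "Classify this support ticket as billing, bug, or other. "
        "Reply with one word.\n\n" + ticket_text
    )
    label = llm(prompt).strip().lower()
    # Fall back to a safe default if the model replies with anything unexpected.
    return QUEUES.get(label, QUEUES["other"])


# A stub model for local testing; swap in a real API call in production.
print(route_ticket("I was charged twice this month", lambda prompt: "Billing"))  # → finance-queue
```

LangChain’s prompt templates, output parsers, and agent executors are all optional here until the workflow actually needs them.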

Starting with a no-code platform for a 20-agent fleet. The inverse mistake. Visual workflows are intuitive for one agent. At 20 agents with interdependencies, shared state, and complex routing, the visual editor becomes a debugging nightmare. Teams that outgrow no-code platforms mid-project face a painful rebuild, not a clean migration.

Picking the framework before scoping the agent. This is the most expensive mistake in practice. Teams go to the “best AI agent platforms” list, pick a winner, start building, and then discover their actual use case does not fit the framework’s model. Define what the agent needs to do, what tools it needs, who will maintain it, and what success looks like, before looking at any platform.

Choosing based on available hires rather than the actual problem. Legitimate constraint, worth acknowledging. If every AI engineer you can hire knows LangChain, that is real information. But factor in the cost: you may be choosing a tool that fits your hiring pipeline rather than your problem. At minimum, document the trade-off. It will surface later.

Locking into OpenAI Agent Builder or Vertex AI without an exit plan. Not a reason to avoid them, but a reason to go in with eyes open. Document what your agent logic looks like today in a platform-neutral description. Know what a migration would require. If you can’t answer “how do we move off this in 12 months if we need to,” you are not ready to go to production.
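One way to keep that platform-neutral description honest is to store it as data in your own repository, independent of any vendor’s export format. In the sketch below, the field names are hypothetical. Capture whatever a migration would actually need to rebuild the agent elsewhere.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class ToolSpec:
    name: str
    description: str
    inputs: Dict[str, str]  # parameter name -> plain-language type


@dataclass
class AgentSpec:
    name: str
    goal: str
    trigger: str
    tools: List[ToolSpec] = field(default_factory=list)
    escalation_rule: str = ""
    owner: str = ""


spec = AgentSpec(
    name="ticket-router",
    goal="Classify inbound support tickets and route them to the right queue",
    trigger="New ticket created in the helpdesk",
    tools=[ToolSpec("assign_queue", "Move a ticket to a named queue",
                    {"ticket_id": "string", "queue": "string"})],
    escalation_rule="Low-confidence classifications go to the support lead",
    owner="ops@example.com",
)

# A vendor-neutral snapshot you can diff, review, and rebuild from on another platform.
print(json.dumps(asdict(spec), indent=2))
```

If this file cannot be kept in sync with what the platform is actually running, the gap itself is a rough measure of your lock-in.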

See also our companion framework on integration decisions: “Systems Integrator vs MSP for Mid-Market.”

What the AI agent builder market actually looks like in 2026

The market is noisy because keyword volume is high, not because there are 13 meaningfully different options. Search interest in “ai agent builder” grew 177% year-over-year through April 2026. “AI agent platform” is even higher volume, at 2,400 monthly searches and 125% YoY growth. “No code ai agent builder” is up 83%. These numbers reflect genuine demand, but also a lot of shopping without a clear purchase framework.

The three categories (code frameworks, no-code platforms, BYO-orchestration) are the meaningful distinction. Vendor consolidation is coming. Several of the 13-platform listicles will be out of date within 12 months as smaller players are acquired or fold. Picking a platform from the current list optimizes for today’s options; picking a category optimizes for the architecture that will survive consolidation.

Lock-in concerns are real and underweighted. Platform schemas evolve fast. OpenAI, Google, and Microsoft have platform interests that do not always align with your stability requirements. Self-hostable and code-first options (n8n, LangChain, CrewAI, Claude Code agents) give you a path off a managed platform if needed.

The no-code category will mature significantly over the next 18-24 months. Platforms that feel limiting today will have better multi-agent support, better observability, and better enterprise controls. If you are evaluating no-code platforms now, check their 2024-2025 release velocity before treating current limitations as permanent.

For context on how agentic workflows fit into a broader automation strategy, the Agentic Workflows for SMBs guide covers the underlying patterns that apply regardless of which builder you choose.

Key Takeaways

- Build-vs-buy is the first question. Vendor selection is the second. Getting the order wrong means you are optimizing the wrong variable.

- Code frameworks (LangChain, CrewAI) require engineering capacity and ongoing maintenance. They pay back in control and cost at volume. If you do not have developers willing to own agent code, do not start here.

- No-code platforms fit business-side ownership and simple workflows. Fast to start, variable costs, vendor-dependent ceilings. The right call when validation speed matters more than long-term architecture.

- Claude Code agents are the middle path: code-first without a framework. No per-seat fees, composes with anything in the shell, and scales to 35+ agents as documented in the scaling post.

- Scope before you shop. The single most expensive mistake is picking a platform and then discovering your use case does not fit it.

- Lock-in in the no-code AI agent platform market is real. Platform schemas break when vendors update. Know your migration cost before going to production on any managed platform.

- The AI agent builder market is noisy because search volume is high, not because there are 13 genuinely different options. Three categories cover the decision space. Pick the category, then pick the vendor.

FAQ

What is an AI agent builder?

An AI agent builder is a tool or framework that lets you create autonomous AI systems capable of using tools, making decisions, and executing multi-step tasks without constant human input. This includes code-first frameworks like LangChain and CrewAI, no-code platforms like n8n and OpenAI Agent Builder, and direct orchestration approaches like Claude Code agents.

Should I use LangChain, CrewAI, or n8n?

Use LangChain or CrewAI when you have engineering capacity and need full control over agent behavior. Use n8n when business-side staff need to own and modify workflows without writing code. If you run Claude Code already and your team is comfortable in the terminal, direct Claude Code sub-agents skip both frameworks entirely.

Is no-code AI agent building production-ready?

Yes, for bounded use cases. No-code platforms like n8n handle single-agent and simple multi-agent workflows reliably. They hit limits at scale: complex orchestration, custom tooling, and cost control are harder without code. Production-readiness depends on what you are asking the platform to do, not whether it is no-code.

How much does it cost to build an AI agent at mid-market?

Costs vary widely. Industry estimates for custom agent development range from $25K to $300K for specialized builds. No-code platforms run $0 to $500/month for most mid-market volumes. A direct Claude Code sub-agent fleet can run under $500/year in API costs if model selection is deliberate. The dominant variable is engineering time, not API spend.

What’s the difference between an AI agent builder and Claude Code agents?

Most AI agent builders (LangChain, n8n, OpenAI Agent Builder) are separate tools layered on top of an LLM. Claude Code agents are the LLM itself operating directly with tool access in a shell environment. No abstraction layer sits between the agent and the infrastructure. The result is simpler architecture, lower overhead, and no additional vendor dependency.

Can our internal team build agents without specialized AI hires?

Probably, depending on which category you choose. No-code platforms like n8n are accessible to any developer comfortable with APIs. Claude Code agents require comfort with the terminal and basic scripting but no ML background. LangChain and CrewAI require Python proficiency and familiarity with LLM concepts. Match the tool to the team you already have.

What happens if I lock into OpenAI Agent Builder or Vertex AI?

Your agent logic, tool definitions, and workflow structure become tied to that vendor’s schema and execution environment. Migrating means rewriting agents, not just switching API keys. Both platforms are evolving fast, so your workflows can break when the vendor updates its schema. Evaluate lock-in tolerance honestly before committing production workflows.

Kaxo’s CTO writes the practitioner content on kaxo.io. Questions about which AI agent platform fits your mid-market environment? Talk to us.
