TL;DR: Answer engine optimization (AEO) is the discipline of structuring content (schema, direct-answer paragraphs, FAQ markup) so AI systems like Google AI Overview, ChatGPT, and Perplexity cite it when answering user queries. Every AI citation is a surface where your brand gets mentioned without a click-through. If you’re publishing content about your business, this is now as important as traditional SEO. This guide covers the terminology confusion, the exact page structures that earn citations, FAQ schema that shows up in People Also Ask, and what it costs at SMB scale.

Contents

- What answer engine optimization actually is

- AEO vs SEO vs LLMO vs GEO: the terminology map

- What AI answer engines actually look for

- The page structure that gets cited

- FAQ schema that shows up in People Also Ask

- What we tracked: the measurement layer

- Anti-patterns: what we tried that didn’t work

- Cost of AEO at SMB scale

- Where AEO goes in 2026-2027

- Canadian angle: what changes for Canadian businesses

- Key Takeaways

- FAQ

Every definition of answer engine optimization online is written by someone who hasn’t shipped the content and checked whether it got cited. The Forbes piece frames it as brand strategy. The Coursera writeup explains what AI is. The CXL guide is well-intentioned but comes from a CRO lens.

For the broader business-side framing of why answer engines matter (not just the technical citation mechanics covered here), see our companion piece on LLMO search for businesses.

This is the practitioner version. We run a content pipeline on kaxo.io. We have AI Overview citations, People Also Ask placements, and ChatGPT mentions on specific queries. The approach is documented below.

What answer engine optimization actually is

Answer engine optimization is the practice of structuring content so AI systems cite it verbatim when answering user queries. The target isn’t a blue-link ranking. It’s the summary box at the top of a Google search, the ChatGPT response to a specific question, or the Perplexity citation that links to your page as the source.

Traditional SEO asks: how do I rank higher? AEO asks: how do I become the answer?

The mechanism is different. Google’s ranking algorithm weighs hundreds of signals and produces an ordered list of links. AI answer engines do something closer to document retrieval followed by generation: they find pages that contain credible, specific answers to the query, extract the relevant passage, and surface it. Pages that write for humans in a retrievable format get cited. Pages optimized for keyword density don’t.

Why it matters now: as of April 2026, Google AI Overview appears on roughly 50% of informational queries in the United States, according to Google’s own documentation on AI-powered search. ChatGPT has over 300 million weekly active users. Perplexity is doubling its user base roughly every six months. The share of searches that return a direct AI-generated answer before any blue link is growing. If your content isn’t structured to be cited, it doesn’t exist for a growing segment of searchers.

AEO vs SEO vs LLMO vs GEO: the terminology map

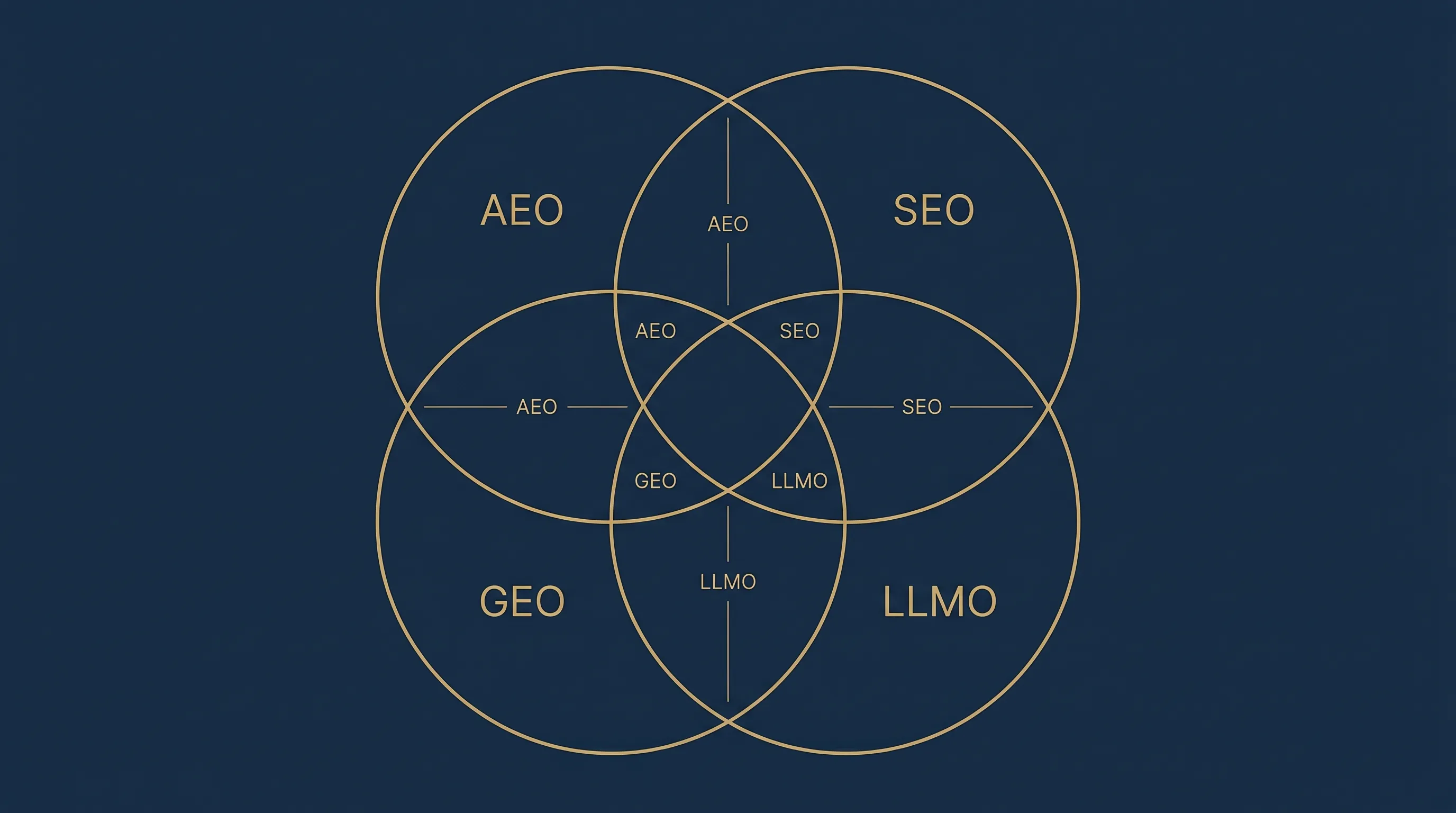

The field has a naming problem. Four terms describe roughly the same discipline, coined by different groups at different times. Here’s the map:

| Term | Coined by | Optimizes for | Status |
|---|---|---|---|
| SEO (Search Engine Optimization) | Industry standard | Blue-link rankings | Baseline discipline, still essential |
| AEO (Answer Engine Optimization) | Marketing/content community | AI answer citations, PAA, featured snippets | Dominant current term |
| LLMO (Large Language Model Optimization) | Technical community | LLM citations in ChatGPT, Claude, Perplexity | Earlier name for the same concept |
| GEO (Generative Engine Optimization) | Academic research | AI-generated search results | Same concept, academic coinage |

The practical reality: AEO vs SEO is the question most people are actually asking. The answer is that they’re complementary. AEO, LLMO, and GEO all describe the same underlying discipline. The argument about which term is “correct” is less useful than building the content structures that work across all of them.

We wrote about this when LLMO was the dominant term in the earlier framing of this thinking. The concept hasn’t changed. The terminology has consolidated around AEO. That piece is still worth reading for the original context.

What AI answer engines actually look for

AI answer engines extract content that is direct, specific, and authoritative. A page gets cited when it gives AI systems something they can use as-is.

Direct-answer lead sentences. Every section should open with a one-sentence answer to the implicit question that section addresses. Not a setup paragraph. Not context. The answer first, then the explanation. AI systems pull the opening sentence of sections because it’s the most efficient extraction point. If your answer is buried in paragraph three, it doesn’t get extracted.

Specific factual claims with names, numbers, and dates. “LLM API costs run $50-200/month for most SMB agentic workflows at current pricing” gets cited. “AI tools can be expensive” doesn’t. Named entities (ChatGPT, Perplexity, Google AI Overview, Claude), dollar figures, percentages with sources, and dates tell AI systems this is a concrete, verifiable claim. Vague content has low citation probability because the system can’t verify it.

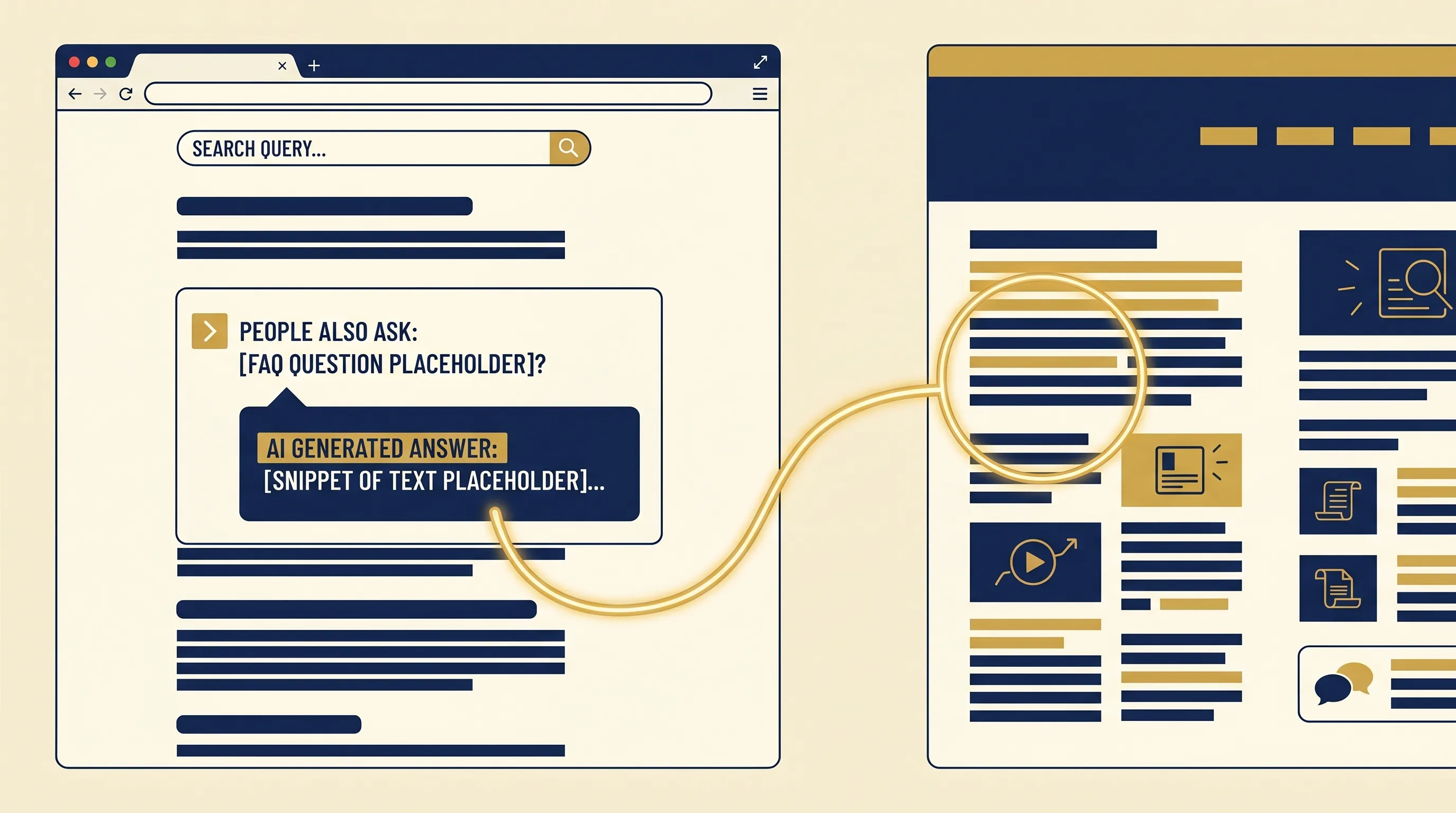

FAQ schema with real questions. Questions that match what users actually type into search. Not marketing-phrased softballs. FAQPage structured data puts your Q&A pairs directly into Google’s data pipeline and increases People Also Ask placement probability. More on this in the FAQ schema section below.

Clear authorship and recency. LLMs weight publication date and author authority when selecting citations. A post dated April 2026 with a named author beats an undated post on the same topic. This is why lastmod in your Hugo frontmatter matters: it signals when the content was last verified.

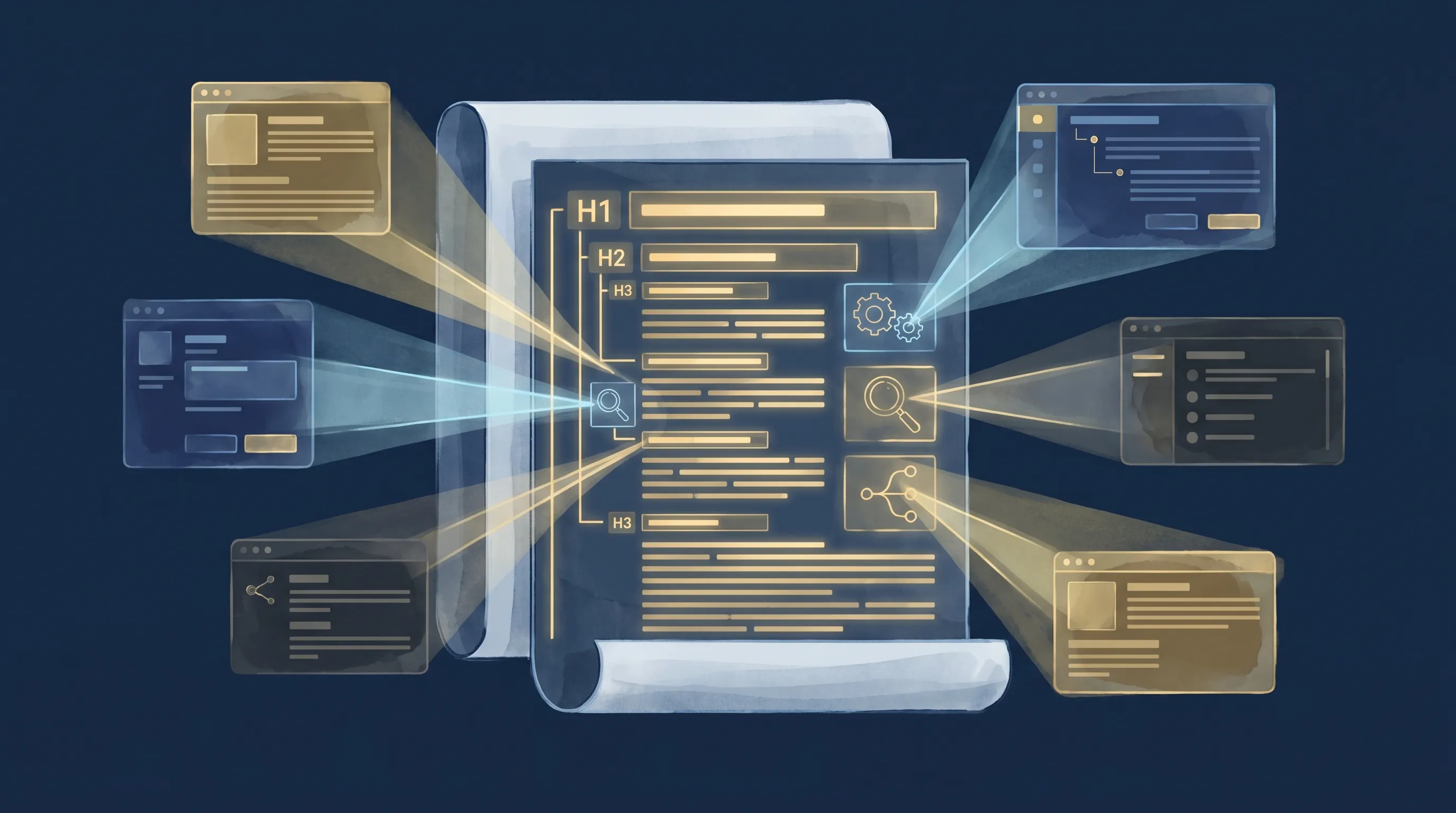

Clean HTML structure. AI crawlers parse heading hierarchies. An H1 with clear H2 sections, each containing a direct-answer lead paragraph, is optimized for extraction. Scroll-trap layouts, JavaScript-rendered content, and nested tab structures all reduce citation probability because they require execution to read. Static, well-structured HTML is the AEO baseline.
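To see what a heading-based extractor would find on a page, here is a minimal Python sketch that pulls the first sentence after each H2 from static HTML. It is an illustration of the idea only, not how any particular answer engine parses pages; the class name, helper names, and sample HTML are all hypothetical, and the sentence splitter is deliberately naive.

```python
from html.parser import HTMLParser


class LeadSentenceExtractor(HTMLParser):
    """Collects each <h2> heading and the first <p> that follows it."""

    def __init__(self):
        super().__init__()
        self.sections = []           # list of [heading_text, lead_paragraph_text]
        self._capture = None         # "h2", "p", or None
        self._awaiting_lead = False  # True between an </h2> and the next </p>

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self.sections.append(["", ""])
            self._capture = "h2"
        elif tag == "p" and self._awaiting_lead:
            self._capture = "p"

    def handle_endtag(self, tag):
        if tag == "h2" and self._capture == "h2":
            self._capture = None
            self._awaiting_lead = True
        elif tag == "p" and self._capture == "p":
            self._capture = None
            self._awaiting_lead = False

    def handle_data(self, data):
        if self._capture == "h2":
            self.sections[-1][0] += data
        elif self._capture == "p":
            self.sections[-1][1] += data


def first_sentence(text):
    """Naive split: everything up to the first period followed by a space."""
    text = " ".join(text.split())
    head, sep, _ = text.partition(". ")
    return head + "." if sep else text


def lead_sentences(html):
    """Return (heading, first sentence of the following paragraph) pairs."""
    parser = LeadSentenceExtractor()
    parser.feed(html)
    return [(h.strip(), first_sentence(p)) for h, p in parser.sections]


# Hypothetical page fragment, invented for illustration.
sample = """
<h1>Guide</h1>
<h2>What is AEO?</h2>
<p>AEO is the practice of structuring content so AI systems cite it. More detail follows.</p>
<h2>Background</h2>
<p>Before we answer this, let's understand the background.</p>
"""

for heading, lead in lead_sentences(sample):
    print(f"{heading!r} -> {lead!r}")
```

Reading the output side by side makes weak openings obvious: the first section leads with an answer, the second leads with setup. Content rendered by JavaScript would not appear in this output at all, which is the point of the static-HTML baseline.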

The page structure that gets cited

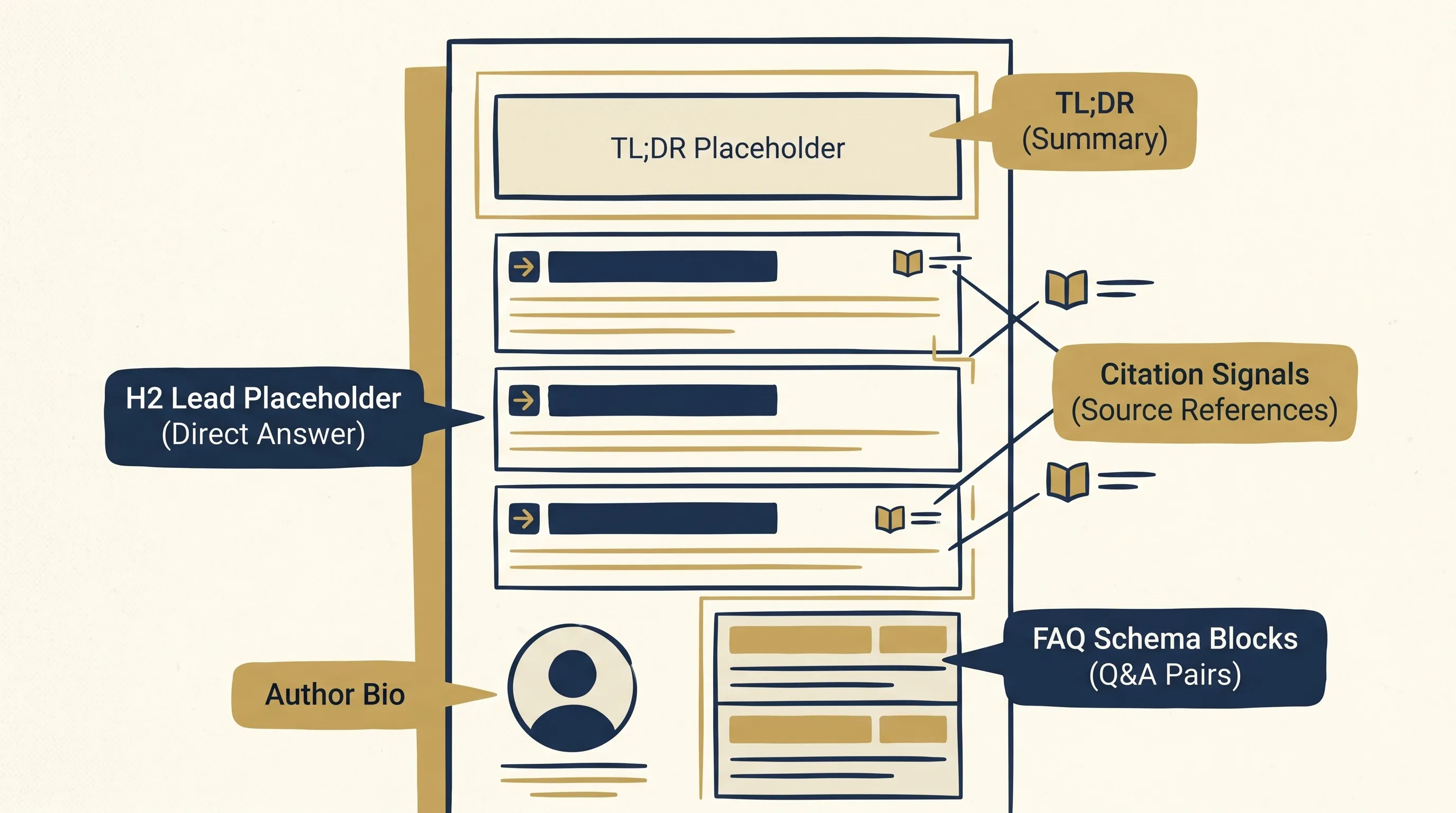

Every post on kaxo.io follows a specific structure that we’ve validated generates AI Overview citations on technical queries. Here’s the template:

TL;DR block at the top. Two to three sentences that answer the question the post addresses. This is the first extraction target for AI systems. If your TL;DR is clear and specific, it gets pulled verbatim. Make it a blockquote or callout box so it’s visually distinct and easily identified in the HTML.

Manual table of contents with anchor links. This signals document structure to crawlers. It also tells AI systems that this is a comprehensive reference, not a short opinion piece. Comprehensive references get higher citation weight.

H2 sections with direct-answer openings. Every H2 in this post opens with a sentence that answers the implicit question of that section. That pattern is deliberate. It’s the single highest-impact structural change you can make to an existing content library. Go through your existing posts and rewrite the first sentence of each section to be a direct answer. The rest of the section can stay as-is.

Concrete specifics per section. At least one dollar figure, percentage, named tool, or dated reference per major section. This gives AI systems anchors for verification.

FAQ section at the bottom with 7+ questions. The FAQ section is not an afterthought. It’s a structured Q&A layer that maps directly to People Also Ask and AI Overview question formats. Each answer should be under 60 words. Each question should match actual user language.

Author bio and publication date visible on the page. Not just in metadata. The bio should be a sentence or two, attribute the post to a named role, and link to your services or credentials. Google’s E-E-A-T framework and LLM citation selection both weight demonstrable expertise.

The agentic workflows SMB guide uses this structure and generates AI Overview citations on queries about agentic workflow costs and definitions. The structure is consistent across that post and this one because it works.
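The template above can be turned into a rough pre-publish check. The sketch below is one possible way to do it in Python, assuming a post is already available as a dictionary; the field names (`tldr`, `author`, `date`, `sections`, `faq`), the function name, and the sample draft are hypothetical. The regular expression is only a proxy for "concrete specifics" and cannot detect named tools.

```python
import re

MIN_FAQ_ENTRIES = 7  # the template's "7+ questions"

# Rough proxy for a concrete specific: a dollar figure, a percentage, or a year.
# Named tools cannot be detected this way and still need a human read.
SPECIFIC = re.compile(r"\$\d|\d\s?%|\b(?:19|20)\d{2}\b")


def audit_post(post):
    """Return a list of template problems found in a post (empty list = passes)."""
    problems = []
    if not post.get("tldr", "").strip():
        problems.append("missing TL;DR")
    if not post.get("author") or not post.get("date"):
        problems.append("missing visible author or publication date")
    for heading, body in post.get("sections", {}).items():
        if not SPECIFIC.search(body):
            problems.append(f"no concrete specific in section: {heading}")
    if len(post.get("faq", [])) < MIN_FAQ_ENTRIES:
        problems.append(f"FAQ has fewer than {MIN_FAQ_ENTRIES} questions")
    return problems


# Hypothetical draft, invented for illustration.
draft = {
    "tldr": "AEO structures content so AI systems cite it.",
    "author": "Example Author",
    "date": "2026-04-01",
    "sections": {
        "Costs": "LLM API costs run $50-200/month for most SMB workflows.",
        "Overview": "AI tools can be expensive.",
    },
    "faq": [("What is AEO?", "AEO is the practice of structuring content for AI citation.")],
}

for problem in audit_post(draft):
    print(problem)
```

A check like this only flags missing structure. Whether the specifics are accurate and the lead sentences actually answer the question still takes an editor.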

FAQ schema that shows up in People Also Ask

FAQPage schema is the most direct route from your content to People Also Ask placement. Here’s what makes it work and what kills it.

Real questions, not marketing questions. “What is answer engine optimization?” is a real question. “How does AEO drive transformative business outcomes?” is marketing copy. AI systems and Google’s PAA algorithm surface questions that match actual user search intent. If you can’t find the question in Google’s People Also Ask or in a keyword tool’s query list, it’s probably not a real question.

Answers under 60 words, definition-first. PAA answers are truncated at display. If your answer is 200 words, only the first 50-60 show up. Structure every FAQ answer so the first sentence is a complete, standalone answer. The elaboration that follows is for readers who click through, not for the citation extract.

Schema in frontmatter, duplicated in body. Hugo’s templating system generates JSON-LD FAQPage schema from frontmatter. The body FAQ section is for readers. Both need to exist. The frontmatter array feeds structured data; the body section provides the readable version. Don’t skip either one.

Specific, falsifiable claims. “AEO results typically take 2-4 weeks to appear” is citable. “AEO results vary depending on your content” is not. AI systems select answers that contain something they can stand behind as a specific claim. Vague answers don’t get picked up.

The OpenClaw production gotchas post has fourteen FAQ entries, most under 60 words, all answering questions we found in actual search queries. Several of those entries appear in Google’s People Also Ask for OpenClaw-related queries. The pattern is repeatable.
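For readers not on Hugo, the structured-data side of this can be sketched in a few lines of Python. The example below builds a schema.org FAQPage object from question-answer pairs and flags answers at or over the 60-word limit. It is not the template used on kaxo.io; the function names and the sample pair are hypothetical, and only the JSON-LD shape (`FAQPage`, `Question`, `acceptedAnswer`, `Answer`) comes from schema.org.

```python
import json

MAX_ANSWER_WORDS = 60  # the "under 60 words" guideline from this section


def build_faq_jsonld(pairs):
    """Build a schema.org FAQPage dictionary from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def overlong_answers(pairs, limit=MAX_ANSWER_WORDS):
    """Return the questions whose answers are not under the word limit."""
    return [question for question, answer in pairs if len(answer.split()) >= limit]


# Hypothetical FAQ entries, invented for illustration.
faq = [
    ("What is answer engine optimization?",
     "Answer engine optimization (AEO) is the practice of structuring content "
     "so AI systems cite it when answering user queries."),
    ("Padded example?", "word " * 75),
]

print(json.dumps(build_faq_jsonld(faq[:1]), indent=2))
print("Too long:", overlong_answers(faq))
```

The printed JSON is what would go inside a `<script type="application/ld+json">` tag in the page head, alongside the readable FAQ in the body.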

What we tracked: the measurement layer

You can’t improve what you can’t see. Here’s the measurement stack we use, cheapest to most expensive.

Google Search Console (free). GSC’s Performance report shows which queries surface your pages in search results, including AI-assisted placements. Filter by page to see which queries are driving clicks. More useful: filter by query and look for zero-click impressions on queries where you rank well. High impressions, low clicks often indicate you’re showing up in an AI-generated answer box and the user didn’t need to click. That’s a citation, not a visit.
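The high-impressions, low-clicks filter can be applied to an exported query report. This is a minimal Python sketch under several assumptions: the column names match what your export actually contains, the thresholds (500 impressions, position 5 or better, click-through rate of 1% or less) are hypothetical starting points rather than tested values, and the sample rows are invented.

```python
import csv
import io

# Invented sample rows. The column names are an assumption about the export
# format, so adjust them to match your own file.
export = """\
Top queries,Clicks,Impressions,CTR,Position
what is answer engine optimization,3,1200,0.25%,2.1
aeo vs seo,40,900,4.44%,3.4
example brand contact,25,60,41.67%,1.0
llmo meaning,1,800,0.13%,14.2
"""


def likely_zero_click(rows, min_impressions=500, max_position=5.0, max_ctr=0.01):
    """Flag queries that rank well and are seen often but rarely clicked."""
    flagged = []
    for row in rows:
        impressions = int(row["Impressions"])
        clicks = int(row["Clicks"])
        position = float(row["Position"])
        # Recompute CTR from raw counts instead of parsing the percent string.
        ctr = clicks / impressions if impressions else 0.0
        if (impressions >= min_impressions
                and position <= max_position
                and ctr <= max_ctr):
            flagged.append(row["Top queries"])
    return flagged


rows = list(csv.DictReader(io.StringIO(export)))
print(likely_zero_click(rows))
```

A flagged query is a candidate, not proof of a citation. It is worth confirming each one with a manual search before logging it.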

Manual citation checks against ChatGPT, Perplexity, Gemini, and Claude. Once a week, query each major engine with the exact questions your content is supposed to answer. Note which posts get cited, which don’t, and what the cited passages look like. This takes about 30 minutes and gives you direct feedback on which page structures are working.

Citation tracking spreadsheet. Simple columns: query, engine, date, cited (yes/no), position in response, cited passage. After two months, patterns emerge. Some pages get cited consistently; others never do. The difference is almost always structural.
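Once the spreadsheet has a few weeks of rows, the patterns can be summarized with a short script. The Python sketch below assumes the sheet is exported as CSV with the columns listed above (header names are hypothetical spellings of those columns) and computes the share of checks that produced a citation, grouped by any column. The log rows are invented.

```python
import csv
import io
from collections import defaultdict

# Invented log rows using the spreadsheet columns described above.
log = """\
query,engine,date,cited,position,cited_passage
what is aeo,ChatGPT,2026-04-06,yes,1,"AEO is the practice of..."
what is aeo,Perplexity,2026-04-06,no,,
what is aeo,ChatGPT,2026-04-13,yes,2,"AEO is the practice of..."
aeo cost,ChatGPT,2026-04-13,no,,
"""


def citation_rate_by(rows, key):
    """Return {value of key: fraction of checks where the page was cited}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for row in rows:
        totals[row[key]] += 1
        if row["cited"].strip().lower() == "yes":
            hits[row[key]] += 1
    return {value: hits[value] / totals[value] for value in totals}


rows = list(csv.DictReader(io.StringIO(log)))
for engine, rate in citation_rate_by(rows, "engine").items():
    print(f"{engine}: {rate:.0%} of checks cited")
for query, rate in citation_rate_by(rows, "query").items():
    print(f"{query}: {rate:.0%} of checks cited")
```

Grouping by query shows which pages never get cited; grouping by engine shows whether one engine is being neglected.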

DataForSEO LLM Mentions API (paid). At $0.002-0.004 per query, this is cost-effective for monitoring a targeted set of queries at scale. We use it quarterly to audit our citation rate across our most important commercial queries. The monthly cost is under $10 for a 50-query monitoring set.

The measurement loop matters because AEO strategy isn’t a one-time optimization. You ship a post, check whether it gets cited, identify the structural reason it does or doesn’t, and adjust the template. Two months of consistent tracking is enough to develop a reliable model of what works on your site.

One thing worth noting: a useful AEO strategy at SMB scale doesn’t require expensive tooling. Google Search Console plus a simple spreadsheet handles 90% of the insight. Paid tools like DataForSEO are useful at scale, not at the start.

Anti-patterns: what we tried that didn’t work

Some of this we tried ourselves. Some we’ve seen clients try. All of it failed.

Keyword-stuffing AI-friendly phrases. We had a post that opened every section with “Answer engine optimization best practices for [topic]:” as if putting the phrase in the heading would trigger citation. AI systems are not keyword matchers. They extract content that is informative. Phrasing that reads as optimized for machines rather than humans gets deprioritized.

Auto-generated FAQ blocks with no real questions. We tested adding a ten-question FAQ generated from a title keyword to a post that didn’t have organic FAQ content. Zero PAA pickups. The questions were technically valid but didn’t match what users actually searched. Real questions from GSC query data outperform generated ones every time.

Trying to predict AI Overview phrasing. Some SEO practitioners are writing content specifically structured to match the sentence patterns that appear in Google AI Overview summaries. This is chasing a moving target. Google changes how AI Overview generates summaries. The underlying signals (specificity, authority, structure) don’t change. Optimize for those, not the output format.

Over-optimizing for one engine. We had a period where we focused heavily on ChatGPT citation structures and neglected Perplexity’s preference for numbered list formats. Perplexity SEO (the practitioner shorthand for optimizing against Perplexity specifically) has different rules than ChatGPT or Google AI Overview, and writing only for one engine leaves citations on the table elsewhere. Both matter. The structures that work for Google AI Overview also work for most other engines, but they’re not identical. The safest approach: write for clarity and specificity, and the citations follow across engines.

Burying the answer in the second paragraph. This sounds obvious but it’s the most common mistake. Every experienced writer has an instinct to establish context before answering. AI systems don’t wait for context. They pull the first extractable sentence in a section. If that sentence is “Before we answer this, let’s understand the background,” you’re not getting cited.

Cost of AEO at SMB scale

These are numbers from Kaxo’s own content pipeline across 2025-2026, not industry estimates. Your mileage will vary by content quality, niche, and schema hygiene.

Content production per post: A practitioner-depth post (2,400-3,200 words, citations, real specifics) takes 4-6 hours of writing time at a loaded rate of $100-150/hour. All-in production cost per post: $400-900. Schema implementation is one-time template work: 2-3 hours to set up FAQPage schema in Hugo or WordPress, then zero marginal cost per post.

Ongoing measurement cost: Google Search Console is free. Manual citation checks cost time, not money (30 min/week). DataForSEO LLM Mentions monitoring for a 50-query set runs under $10/month. Total ongoing cost: $0-10/month for measurement infrastructure.
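The arithmetic behind these ranges is simple enough to check directly. The Python sketch below uses only figures quoted in this guide (4-6 writing hours, $100-150/hour, $0.002-0.004 per query, a 50-query set, a $10 monthly ceiling); the function and variable names are hypothetical. `Decimal` is used for the per-query prices to avoid floating-point rounding on small dollar amounts.

```python
from decimal import Decimal

# Figures quoted in the text.
WRITING_HOURS = (4, 6)                                    # hours per post
LOADED_RATE = (100, 150)                                  # dollars per hour
PRICE_PER_QUERY = (Decimal("0.002"), Decimal("0.004"))    # dollars per query
QUERY_SET_SIZE = 50                                       # monitored queries
MONTHLY_BUDGET = Decimal("10")                            # dollars per month


def production_cost_range(hours, rate):
    """Low and high production cost for one post, in dollars."""
    return hours[0] * rate[0], hours[1] * rate[1]


def monitoring_run_cost(price, query_count):
    """Low and high cost of running the full query set once, in dollars."""
    return price[0] * query_count, price[1] * query_count


post_cost = production_cost_range(WRITING_HOURS, LOADED_RATE)
run_cost = monitoring_run_cost(PRICE_PER_QUERY, QUERY_SET_SIZE)
# Worst case: how many full runs fit in the budget at the highest price.
runs_within_budget = int(MONTHLY_BUDGET / run_cost[1])

print(f"Production per post: ${post_cost[0]}-{post_cost[1]}")
print(f"One monitoring run: ${run_cost[0]}-{run_cost[1]}")
print(f"Full runs within ${MONTHLY_BUDGET}/month at the top price: {runs_within_budget}")
```

One full pass over the query set costs well under a dollar, so the $10/month ceiling leaves room for dozens of passes. Writing time, not tooling, dominates the budget.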

Realistic citation volume: Publishing 2 practitioner-depth posts per month, we see new AI Overview citations appearing within 2-4 weeks per post. After six months of consistent publishing, we have AI Overview citations on roughly 15-20 specific technical queries. The commercial-intent queries (consulting, services) have lower citation rates than informational queries but are growing.

ROI envelope: A cited post in Google AI Overview generates brand impressions on queries where we’d otherwise have zero presence. The value isn’t direct click traffic; it’s name recognition in AI-generated answers. For a B2B services firm, one inbound inquiry from a prospect who “saw Kaxo mentioned by ChatGPT” covers months of content production costs.

The agentic workflows guide has the detailed breakdown of AI automation cost structures for small businesses. The content investment math is similar.

Where AEO goes in 2026-2027

The trend lines are not subtle.

AI Overview is now on roughly half of informational queries in the US. That percentage will keep rising. Google has a structural incentive to serve AI-generated answers: users who get direct answers stay in the Google ecosystem longer. The blue-link percentage will shrink, slowly at first and then quickly.

More AI engines entering the market means more surfaces to appear on. Gemini, Claude, Perplexity, and ChatGPT all have different citation patterns, but all reward the same underlying content signals. A well-structured, authoritative, specific page earns citations across all of them.

Brand mention becomes the unit of value, what some practitioners are now calling AI visibility. Traditional SEO measures success by clicks and rankings. AEO measures success by citations and AI visibility across ChatGPT, Perplexity, and Google AI Overview. A business that gets cited in ChatGPT responses for their category earns brand awareness even when the user never clicks through. This is closer to PR than traditional SEO. The content budget should reflect that.

Content depth beats content velocity. The SEO-era playbook of 30 keyword-targeted posts per month is dead for most businesses. One practitioner post with real specifics earns more AEO value than ten thin posts optimized for keyword density. Reduce volume. Increase depth.

Canadian angle: what changes for Canadian businesses

Canadian searchers get the same AI Overview as US searchers. There’s no regional version of Google AI Overview that would give Canadian content special placement for Canadian queries. The AEO discipline is the same regardless of location.

Where Canadian businesses have an edge: local and bilingual queries. If your content covers topics specific to Canadian regulations, industries, or business contexts (PIPEDA, SR&ED, provincial tax treatment), you face less competition for AEO placement on those queries than on generic international topics. Our location-specific content, like AI consulting in Toronto, gets cited on local-intent queries where the AI has fewer high-quality Canadian sources to pull from.

One consideration: no special data residency issue for AEO content itself. AEO is about how you structure publicly available content. The question of where your content is hosted matters less than whether it’s accessible to AI crawlers. Most static sites hosted on Cloudflare Pages or similar infrastructure are fully crawlable and AEO-eligible. The data residency concerns that apply to customer data in agentic workflows don’t apply here.

Key Takeaways

- Answer engine optimization targets AI citations, not blue-link rankings. Both matter. The disciplines are complementary, not competing.

- AEO, LLMO, and GEO are the same concept with different names. Stop arguing about terminology and build the page structures.

- Every H2 section should open with a one-sentence direct answer. This is the single highest-impact structural change you can make.

- FAQPage schema with real questions (under 60 words each, matched to actual user queries) is the most direct route to People Also Ask placement.

- Measurement is simple: Google Search Console (free), weekly manual citation checks, optional DataForSEO LLM monitoring for targeted queries.

- A practitioner post with real specifics costs $400-900 to produce and earns AI citations within 2-4 weeks. The ROI math works at SMB scale.

- Content depth is the equalizer. Small businesses with specific, practitioner-grade content outperform enterprise sites with generic coverage on AEO metrics.

FAQ

What is answer engine optimization?

Answer engine optimization (AEO) is the practice of structuring content so AI systems like Google AI Overview, ChatGPT, and Perplexity cite it when answering user queries. It focuses on earning citations and direct-answer placements, not just blue-link rankings. The core technique: lead every section with a one-sentence direct answer an AI can extract verbatim.

How is AEO different from SEO?

SEO optimizes for ranking in blue-link search results. AEO optimizes for being cited by AI answer engines. Both matter. SEO targets PageRank signals; AEO targets citation signals: direct-answer lead sentences, specific factual claims with dollar figures and dates, FAQ schema, and clear authority markers. A page can do well on one set of signals while failing on the other.

Is SEO dead because of AEO?

No. As of April 2026, blue-link clicks still drive the majority of search-originated traffic. AI Overview appears on roughly 50% of informational queries in the US, but many searches still return standard results. SEO and AEO are complementary disciplines. Run both.

Which answer engines matter most in 2026?

Google AI Overview is the highest-volume surface because it appears inline on Google Search. ChatGPT has the largest installed base among dedicated AI assistants. Perplexity is growing fastest in the research-intent segment. Gemini is significant for Google Workspace users. Claude matters in technical and professional contexts.

What schema markup helps with AEO?

FAQPage schema is the highest-impact AEO markup. It puts your question-answer pairs directly into Google’s structured data pipeline, increasing the probability of People Also Ask placement and AI Overview citation. Article schema establishes publication date and authorship. BreadcrumbList helps with page hierarchy signals. See Schema.org’s FAQPage reference for implementation details.

How long does AEO take to show results?

Faster than traditional SEO. We’ve seen AI Overview citations appear within 2-4 weeks of publishing a well-structured post. The mechanism is different: AI systems re-crawl and re-evaluate sources continuously. A post that earns one citation often earns more as engines gain confidence in the source.

Can small businesses compete on AEO against enterprise sites?

Yes. AI answer engines weight specificity and direct answers more than domain authority. A practitioner post with real numbers, named tools, and dated observations beats a generic enterprise blog post. We have AI Overview citations on niche technical topics where our domain authority is minimal. Specificity is the equalizer.

Want an independent review of your AI stack?

If you are evaluating AI tools or platforms and want a structured review of fit, ROI, and implementation order before committing, see our AI Tools Audit service. Independent, Canadian, no vendor referral fees.

If you want help building an AEO-optimized content pipeline for your business, get in touch at kaxo.io/#contact. We scope the content structure, implement the schema, and set up the measurement layer.

For a deeper look at how AI agents use content in their workflows, see our agentic AI consulting services.

Soli Deo Gloria
